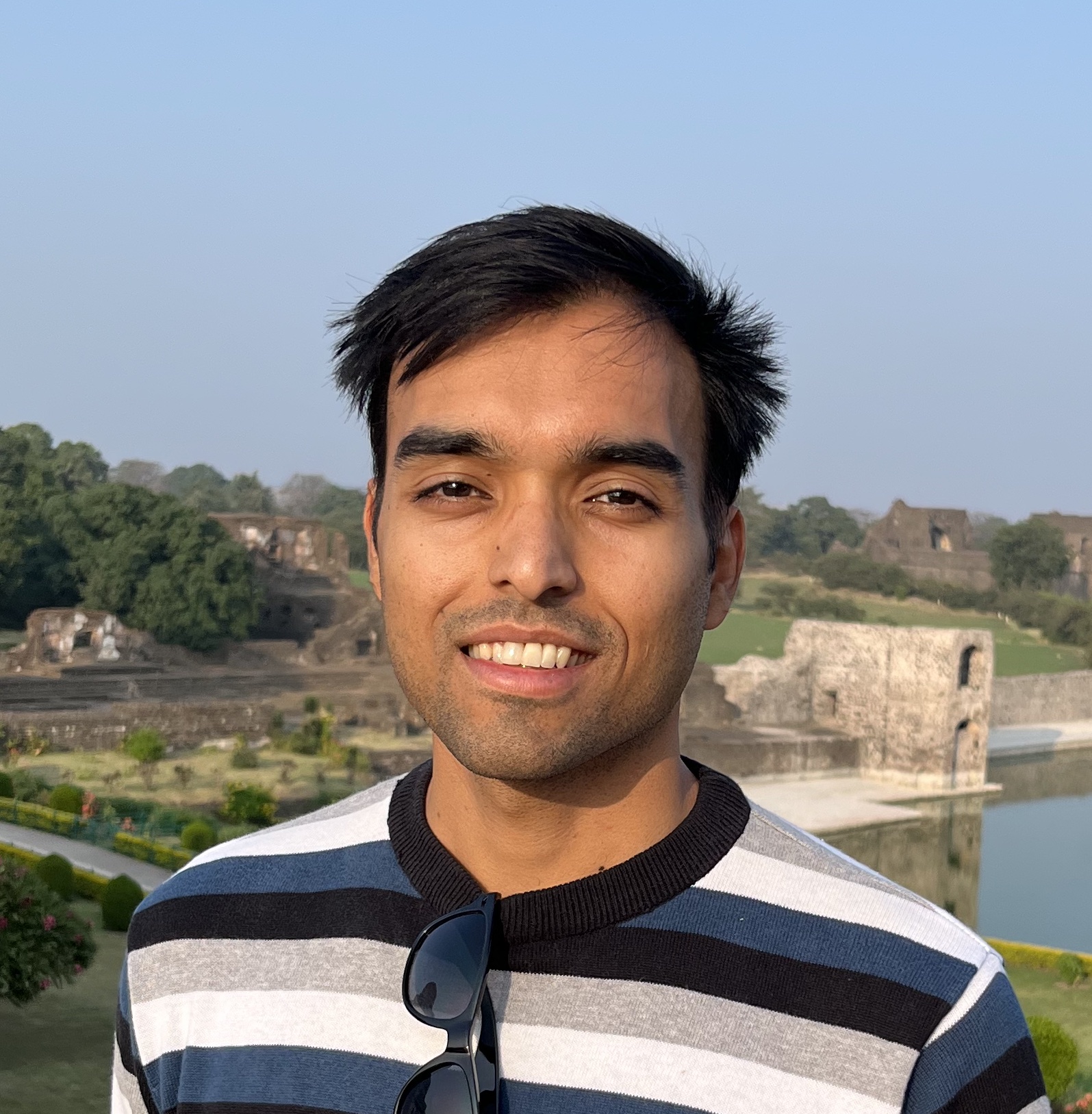

निरुपम गुप्ता / Nirupam Gupta

I am a Tenure-Track Assistant Professor in the ML Section of the Department of Computer Science at the University of Copenhagen (DIKU). Before joining DIKU, I was a Postdoctoral Researcher in the School of Computer Science at EPFL (Switzerland) and the Department of Computer Science at Georgetown University (USA). I obtained my PhD from the University of Maryland, College Park (USA) and my Bachelor's degree from the Indian Institute of Technology (IIT) Delhi (India).

Research. I work primarily on distributed machine learning (a.k.a. federated learning) algorithms, focusing on the challenges of robustness and privacy. To learn the basics of robustness in distributed learning, check out my latest book, Robust Machine Learning: Distributed Methods for Safe AI. I have several ongoing projects on these topics, some of which are highlighted below.

Teaching. I teach Privacy in Machine Learning (PriMaL) and Machine Learning B (MLB) at DIKU. PriMaL is offered in the Fall semester, while MLB is offered in the Spring semester. Both courses are offered in a hybrid format and support fully remote participation. For more details, such as course registration, check out this website for ML courses at DIKU.

"It seems complex only because of ignorance; otherwise everything is simple." - OSHO (The Book of Secrets)